Drumroll

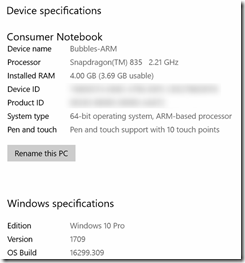

I have been asked to evaluate a prototype Windows 10 on ARM PC. You might have seen people talk about these devices already: my friend Lance wrote about his one-day developer experience, Daren May has something to say about remote debugging with them, and wouldn’t you know, on the first day of spring one sprung up at Paul Thurrott’s site. I am not sure if that’s exactly the same model as mine; it looks pretty similar, but that’s not really important. As far as Windows goes, the platform and what it can do is more interesting to me than the underlying hardware. Windows goes ARM – yet again, one might say.

Wait, haven’t we seen this before?

Windows has run on ARM before: on tablets, on phones, and on IoT devices like the Raspberry Pi. Windows RT was a Windows 8 variant, Windows Mobile actually made it to Windows 10, and IoT devices run a super compact version of Windows 10 and UWP apps. In all these cases, apps on ARM-based Windows versions could only be native (ARM) apps. For a number of use cases, the lack of compatibility with the vast library of Windows apps created over the years posed a bit of a challenge. And that’s where some brand new tech comes in. The new Windows 10 on ARM runs actual x86 code, made for the ‘conventional’ Intel chips, translating it on the fly. Part of the magic is a technology called CHPE (pronounced “chip-pee”); Lance’s article has a nice in-depth explanation of it. I talked to people working on CHPE during the last MVP Summit. Modest and quiet people they are, but by golly, I felt like a Neanderthal getting a quantum physics 101 lecture from the late Professor Hawking when they casually talked about a few of the things they had to overcome. Really impressive.

And of course, there’s this.

Also, fun fact: because my good old Map Mania app still has an ARM package (intended for phones), the device gets that native ARM package from the Store, and the app runs very fast. So pay attention, kids: by all means, submit an ARM package when you put your app in the Windows Store.

So if this is just Windows… how about devices?

One of the things I like most about Windows is that whatever device you plug into it, it works, and nearly instantly. If it does not, chances are the device is defective, rather than Windows failing to eke at least the basic functionality out of it. I have had… let’s say, other and utterly frustrating experiences with other operating systems. However, the device I have has just one port – a USB-C port. It charges fine with the accompanying charger, but what about other devices?

This is where the fun starts. As a former Windows Phone MVP, I went all the way to the Lumia 950XL, scoring a free Continuum Dock with the phone. Remember this one? Connect a keyboard, a mouse and a monitor to it, plug the other end into your Lumia, and you basically had a PC-from-your-pocket. It turns out Microsoft did not use proprietary tricks, but apparently just a standard protocol.

I plugged the dock into the device, and the power supply into the dock:

Score one – it charged. Then I went a bit… overboard.

I connected this entire pile of hardware to it. And all of it worked. What you see here, connected simultaneously:

- A Dell monitor connected via DisplayPort (tried the HDMI port too – worked as well)

- Two USB hubs, because I have only 3 USB ports on the dock ;)

- A generic USB key

- A Microsoft Basic Mouse V2

- A Microsoft Natural Ergonomic Keyboard 4000 v1

- A Xiaomi Mi 5 Android phone

- A Microsoft LifeChat LX-3000 headset

- A Microsoft Xbox One controller

- A Microsoft Sculpt ergonomic keyboard and accompanying mouse set (via a wireless dongle)

Not on this picture, but successfully tried:

- A HoloLens – it got set up, but I could not connect to the Device Portal via localhost:10080. I have to look into that a bit more. Other things work too, but that’s outside the scope of this article.

- A fairly new Canon DSLR, but I needed that one to take the picture so it’s obviously not in it ;)

I also found that the PC happily charges from a Lizone QC series battery, which I originally bought to extend my Surface Pro 4’s battery life on long transatlantic flights. The Windows 10 on ARM PC itself is missing from the picture – that’s because it’s a pre-release device and I don’t want pictures of it to roam around the internet.

Did I find stuff that did not work? In fact, I did:

- I could not get a fingerprint reader to work. It is a pre-release device that a fellow MVP gave me for free at the Summit, 1.5 or maybe 2.5 years ago. Although it is set up and recognized, I cannot activate it in the settings screen. Maybe the PC’s built-in Windows Hello compatible camera gets priority.

- A wireless dongle for Xbox One controllers. Remember that the original Xbox One controllers did not have Bluetooth? This gadget allows you to connect them to PCs anyway. It connects, but nothing is set up. It’s not a big deal, as a controller plugged in via a USB cable works just fine. I suppose this dongle was not sold in large volumes, and probably is not sold at all anymore, as all newer Xbox One controllers can be connected via Bluetooth. Only people hanging on to old hardware (guilty as charged) would run into this.

General conclusion

I feel like a broken record, because I keep coming back to this simple fact: it’s just Windows. It will run your apps pretty nicely, it will connect to nearly all of your hardware, and it gives you a very long battery life. Although I can imagine battery life might degrade a little if you add this many devices to its USB port. But then again, if you need this many devices connected to your PC, you might want to rethink what kind of PC you want to buy anyway ;). The point is, you can, and everything but very obscure devices will work.

Now, if you will excuse me, I have to clean up an enormous pile of stuff – my study looks like a minor explosion took place in the miscellaneous hardware box.