Intro

In my previous post I wrote about how game objects can be made clickable (or 'tappable') using the Mixed Reality Toolkit 2, and how things changed from MRTK1. And in fact, when you deploy the app to a HoloLens 1, my demo works as intended. But then I noticed something odd in the editor, so I made a variant of the app that went with the previous blog post to see how things work (or might work) on HoloLens 2.

Debugging ClickyThingy ye olde way

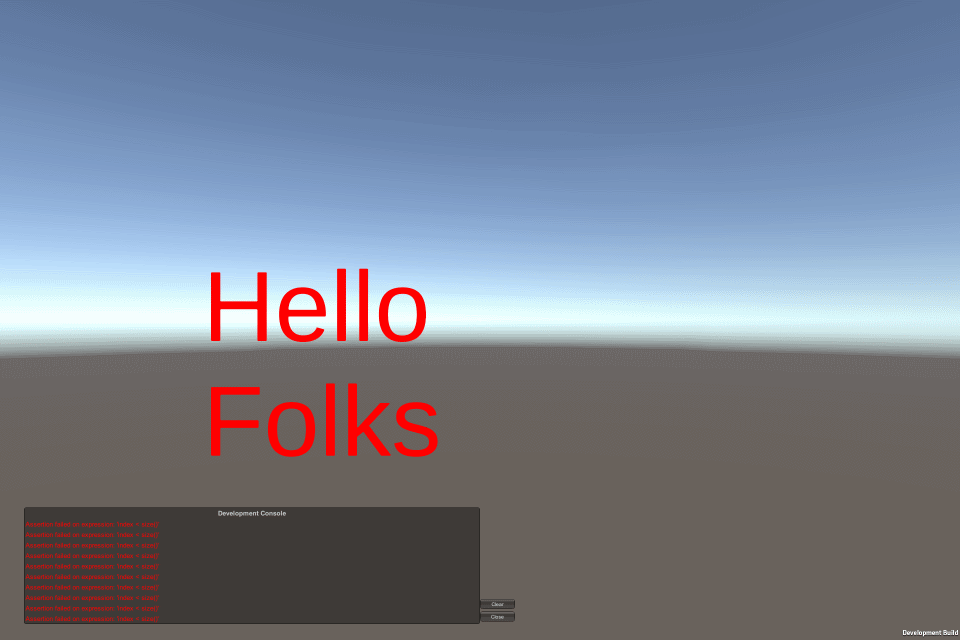

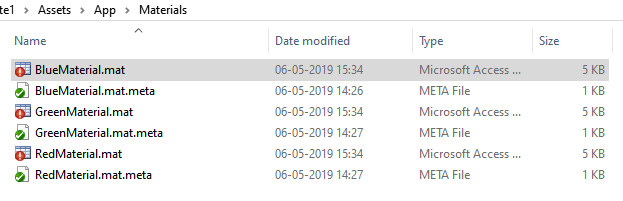

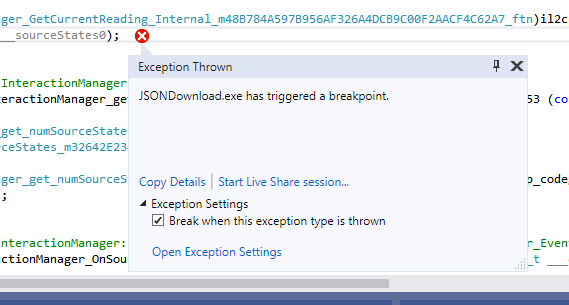

Like I wrote before, it's possible to debug the C# code of an IL2CPP C++ app running on a HoloLens. Debugging with breakpoints is a bit tricky when you are dealing with rapidly firing events: stopping in the debugger might actually influence the order in which events play out. So I resorted to the good old "Console.WriteLine-style" of debugging, and added a floating text to the app that shows what's going on.

The ClickableThingy behaviour I made in the previous post, with the debug text added, looks like this:

```csharp
using Microsoft.MixedReality.Toolkit.Input;
using System;
using TMPro;
using UnityEngine;

public class ClickableThingyGlobal : BaseInputHandler, IMixedRealityInputHandler
{
    // Floating TextMeshPro text that acts as an on-device "console".
    [SerializeField]
    private TextMeshPro _debugText;

    public void OnInputUp(InputEventData eventData)
    {
        GetComponent<MeshRenderer>().material.color = Color.white;
        AddDebugText("up", eventData);
    }

    public void OnInputDown(InputEventData eventData)
    {
        GetComponent<MeshRenderer>().material.color = Color.red;
        AddDebugText("down", eventData);
    }

    // Appends one line with the event type, the name of this game object
    // and the description of the input action that fired the event.
    private void AddDebugText(string eventPrefix, InputEventData eventData)
    {
        if (_debugText == null)
        {
            return;
        }

        var description = eventData.MixedRealityInputAction.Description;
        _debugText.text +=
            $"{eventPrefix} {gameObject.name} : {description}{Environment.NewLine}";
    }
}
```

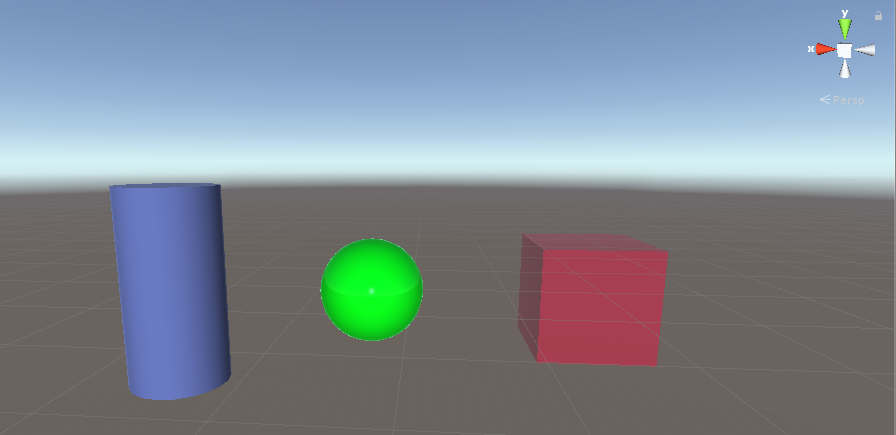

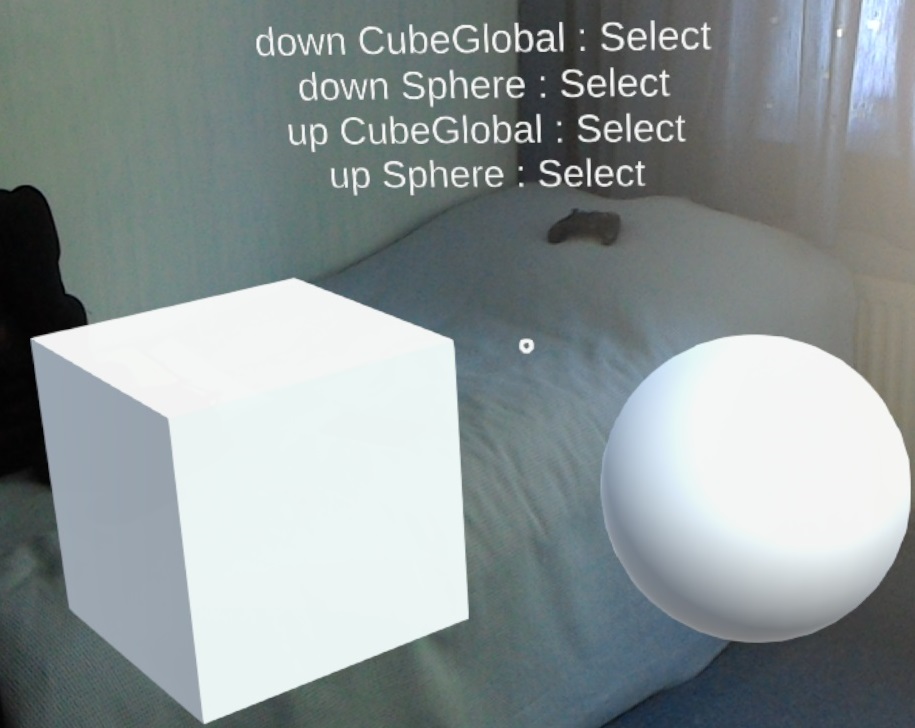

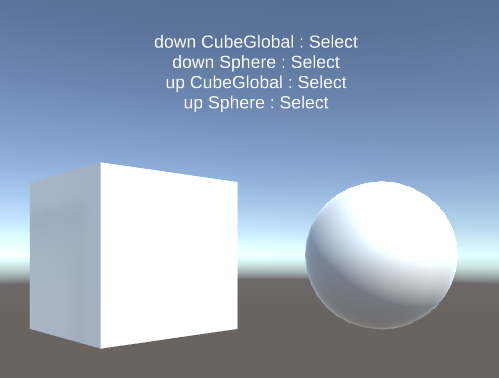

Now on a HoloLens 1, things are exactly as you would expect. Air tapping the sphere fires the Up and Down events exactly once per tap (because the Cube gets every tap, even when you don't gaze at it - see my previous post for an explanation).

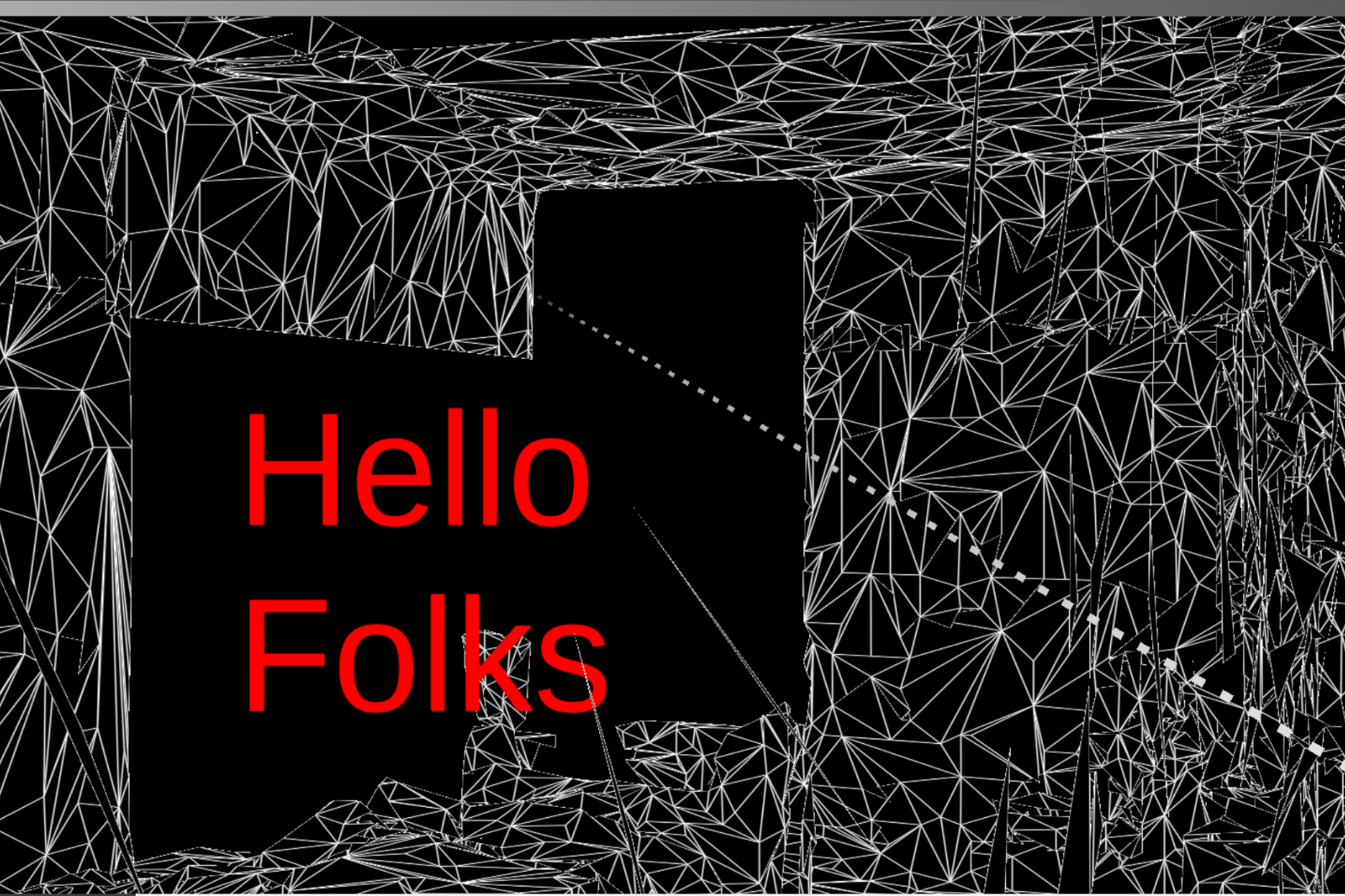

When you run the same code in the editor, though, you get a different result:

Tap versus Grip - and CustomPropertyDrawers

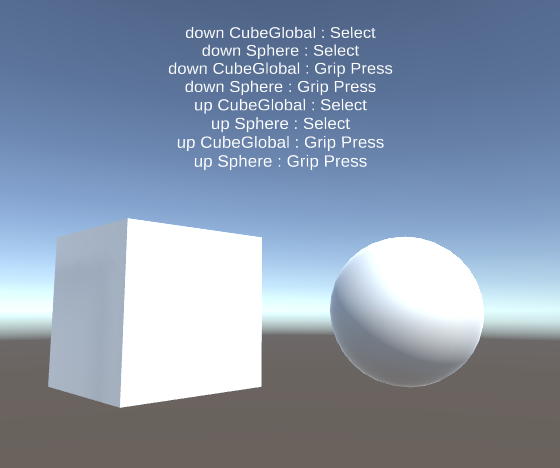

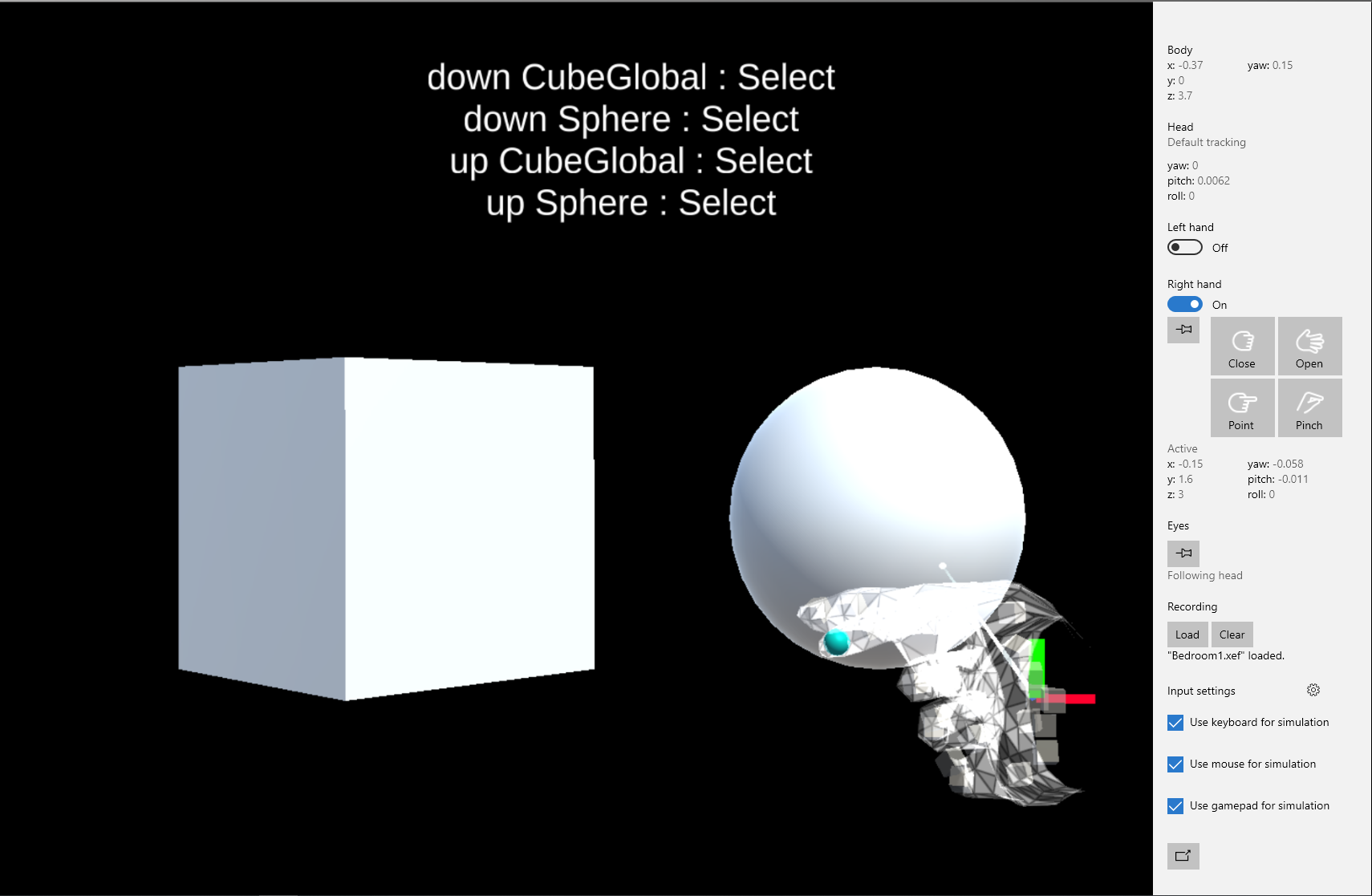

The interesting thing is that when you 'air tap' in the editor (using the space bar and the left mouse button), the thumb and index finger of the simulated hand come together. Apparently, this is recognized as a tap followed by a grip.

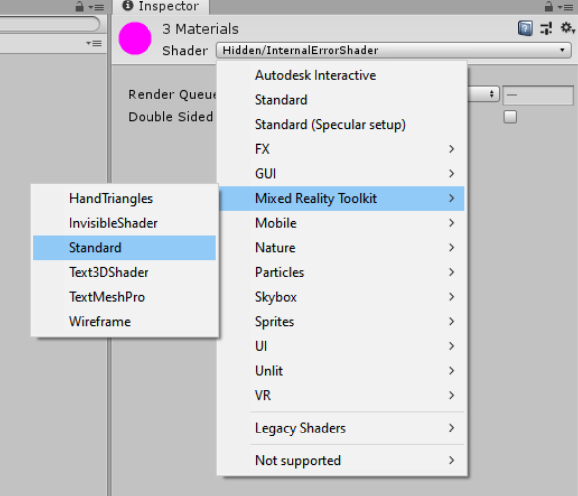

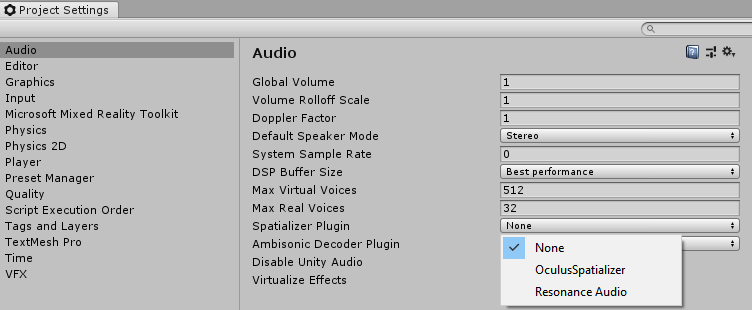

So we need to filter the events coming in through OnInputUp and OnInputDown, and respond only to the events we actually want. This is where things get a little bit unusual - there is no enumeration or similar type you can compare your actual event against. The available events are all defined in the configuration, so they are created dynamically.

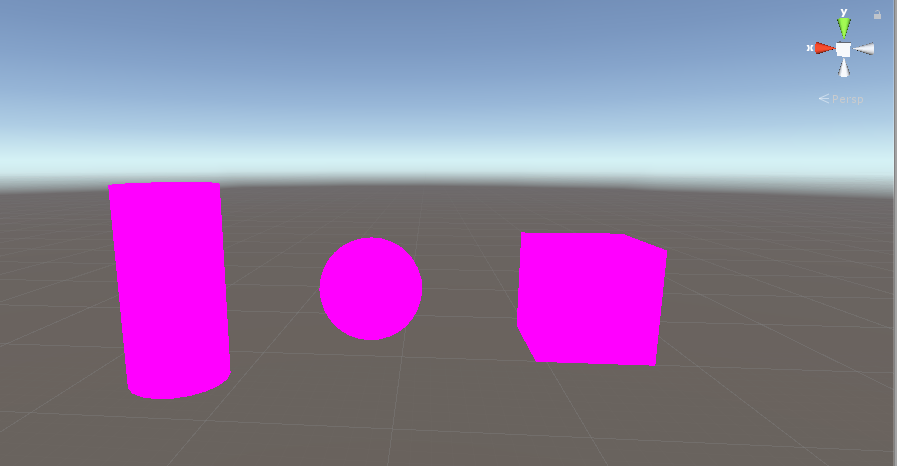

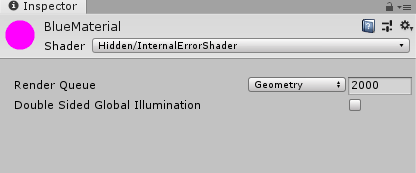

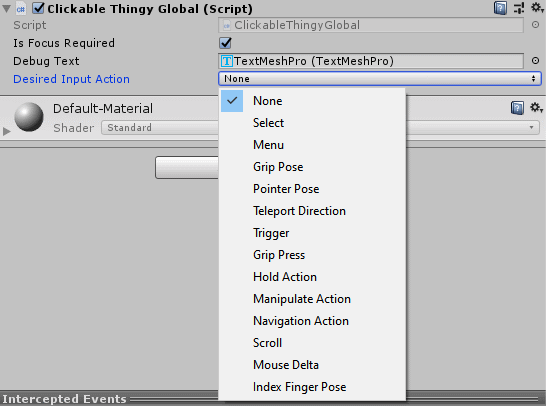

The way to do the actual filtering is to add a property of type MixedRealityInputAction to your behaviour (I used _desiredInputAction). The MRTK2 then automatically creates a drop-down with the possible events to select from:

How does this magic work? Well, the MRTK2 contains a CustomPropertyDrawer called InputActionPropertyDrawer that automatically creates this drop-down whenever you add a property of type MixedRealityInputAction to your behaviour. The values in the list are pulled from the configuration. This fits the MRTK2 philosophy that everything must be configurable ad infinitum, which is cool, but sometimes makes things confusing.
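To get a feel for the mechanism, here is a minimal, generic property drawer sketch. It is not MRTK2's InputActionPropertyDrawer: the ConfiguredChoice type, its drawer and the hard-coded option list are all hypothetical, whereas the real drawer reads its options from the MRTK2 configuration. The sketch only shows the Unity editor API involved: the CustomPropertyDrawer attribute, PropertyDrawer.OnGUI and EditorGUI.Popup.

```csharp
using UnityEngine;
#if UNITY_EDITOR
using UnityEditor;
#endif

// Hypothetical serializable type, standing in for a type like MixedRealityInputAction.
[System.Serializable]
public struct ConfiguredChoice
{
    // Index of the chosen option in the drawer's list.
    public int id;
}

#if UNITY_EDITOR
// Tells the inspector to use this drawer for every serialized ConfiguredChoice field.
[CustomPropertyDrawer(typeof(ConfiguredChoice))]
public class ConfiguredChoiceDrawer : PropertyDrawer
{
    // Hypothetical hard-coded stand-in list; MRTK2's drawer pulls its list from the configuration.
    private static readonly string[] Options = { "None", "Select", "Grip Press", "Menu" };

    public override void OnGUI(Rect position, SerializedProperty property, GUIContent label)
    {
        EditorGUI.BeginProperty(position, label, property);

        // Draw a drop-down and write the selected index back into the serialized struct.
        var idProperty = property.FindPropertyRelative("id");
        idProperty.intValue = EditorGUI.Popup(position, label.text, idProperty.intValue, Options);

        EditorGUI.EndProperty();
    }
}
#endif
```

With this file in the project, any behaviour with a `[SerializeField] private ConfiguredChoice _choice;` field should show a drop-down in the inspector instead of a plain integer field. The `#if UNITY_EDITOR` guard keeps the editor-only drawer out of player builds, so the sketch can live in a single file outside an Editor folder.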

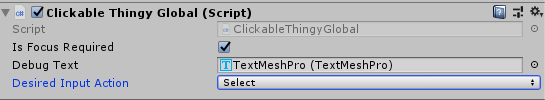

Anyway, you select the event you want to test for in the UI, in this case "Select":

Then, in the event methods, you have to check whether the incoming event matches your desired event:

```csharp
if (eventData.MixedRealityInputAction != _desiredInputAction)
{
    return;
}
```

And then everything works as you expect: only the Select event results in an action by the app.
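Putting the serialized field and the check together, a filtered version of the behaviour could look roughly like the sketch below. The class name ClickableThingyFiltered and the helper IsDesiredAction are hypothetical names chosen for this illustration, the debug text is left out to keep it short, and it assumes the same MRTK2 version and handler interface as the code earlier in this post.

```csharp
using Microsoft.MixedReality.Toolkit.Input;
using UnityEngine;

// Hypothetical variant of ClickableThingyGlobal that only reacts to one input action.
public class ClickableThingyFiltered : BaseInputHandler, IMixedRealityInputHandler
{
    // MRTK2's InputActionPropertyDrawer shows this field as a drop-down in the inspector.
    [SerializeField]
    private MixedRealityInputAction _desiredInputAction;

    public void OnInputUp(InputEventData eventData)
    {
        // Ignore anything that is not the action picked in the inspector (e.g. a grip).
        if (!IsDesiredAction(eventData))
        {
            return;
        }
        GetComponent<MeshRenderer>().material.color = Color.white;
    }

    public void OnInputDown(InputEventData eventData)
    {
        if (!IsDesiredAction(eventData))
        {
            return;
        }
        GetComponent<MeshRenderer>().material.color = Color.red;
    }

    // Hypothetical helper shared by both handlers: compares the incoming action
    // with the one selected in the inspector.
    private bool IsDesiredAction(InputEventData eventData)
    {
        return eventData.MixedRealityInputAction == _desiredInputAction;
    }
}
```

Factoring the comparison into one method keeps the Up and Down handlers consistent, so a down event can't be filtered differently from its matching up event.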

How about HoloLens 2?

I could only test this in the HoloLens 2 emulator. The odd thing is that, even without the check on the input action, only the select action was fired, even when I pinched the hand using the control panel:

So I have no idea whether this is actually necessary on a real, live HoloLens 2, but my friends and fellow MVPs Stephen Hodgson and Simon 'Darkside' Jackson have both mentioned this kind of event type check as being necessary in a few online conversations (although at the time I did not understand why). So I suppose it is :)

Conclusion

Common wisdom has it that the best thing about teaching is that you learn a lot yourself. This post is excellent proof of that wisdom. If you think this here old MVP is the end-all and know-all of this kind of stuff, think again. I knew of custom editors, but I literally just learned the concept of CustomPropertyDrawer while I was writing this post. I had no idea it existed, but I found it because I wanted to know how the heck the editor got all the possible MixedRealityInputAction values from the configuration and showed them in such a neat list. It took me quite some searching, actually - which is logical if you don't know exactly what you are looking for ;).

I hope this benefits you as well. Demo project here (branch TapCloseLook).

The right way
