Wait a minute – you did handle tap before, right?

Indeed, dear reader, I did. But I also had signed up for a MixUG session on Wednesday July 3. And while making demos for that I learned some other ways to handle interaction. Once again it shows that the best way to learn things is to try to teach them – because the need to explain things induces the need to actually obtain a deeper knowledge.

Ye olde way

In the MRTK 1, it was thus:

- Handle tap – implement IInputClickHandler

- Handle drag – implement IManipulationHandler

- Handle focus – implement IFocusable

- Handle touch – forget it. ;)

The new way

In the MRTK 2 it is now:

- Handle tap – implement IMixedRealityPointerHandler

- Handle drag – see above

- Handle focus – implement IMixedRealityFocusHandler

- Handle touch – IMixedRealityTouchHandler

Now I am going to ignore drag for this tutorial, and concentrate on tap, focus and touch.

IMixedRealityPointerHandler

This requires you to implement four methods:

- OnPointerDown

- OnPointerDragged

- OnPointerUp

- OnPointerClicked

OnPointerClicked basically intercepts a tap or an air tap, and will work as such if you deploy the demo project to a HoloLens 1. After being bitten by people tapping just a tiny bit too slow and therefore not getting a response, I tend to implement OnPointerDown rather than OnPointerClicked to capture a 'tap' event, but that's a matter of preference.
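To make that a bit more concrete, here is a minimal sketch of a pointer handler that counts taps in OnPointerDown. The class name TapCounter and the log message are made up for illustration; this is not the CodedInteractionResponder from the demo project, and it assumes the MRTK 2 Microsoft.MixedReality.Toolkit.Input namespace.

```csharp
using Microsoft.MixedReality.Toolkit.Input;
using UnityEngine;

// Hypothetical example class, not the script from the demo project.
public class TapCounter : MonoBehaviour, IMixedRealityPointerHandler
{
    private int tapCount;

    // Called as soon as the pointer is pressed, so a slow tap still registers.
    public void OnPointerDown(MixedRealityPointerEventData eventData)
    {
        tapCount++;
        Debug.Log($"{name} was tapped {tapCount} time(s)");
    }

    // Called only when the press and release are recognized as a click.
    // Left empty here because the tap is already handled in OnPointerDown.
    public void OnPointerClicked(MixedRealityPointerEventData eventData) { }

    // The interface requires these two as well, even if you do nothing with them.
    public void OnPointerDragged(MixedRealityPointerEventData eventData) { }

    public void OnPointerUp(MixedRealityPointerEventData eventData) { }
}
```

If you prefer OnPointerClicked, move the counting code there and leave OnPointerDown empty. Counting in both would register a normal tap twice.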

IMixedRealityFocusHandler

You will need to implement:

- OnFocusEnter

- OnFocusExit

The method names are the same as in MRTK 1, only the signatures are not - you now get a parameter of type FocusEventData, which gives you some more information: what focused the object (we have multiple ways of doing that now!), what the previously focused object was, and what the newly focused object is.
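A minimal sketch of what that could look like, assuming the Pointer, OldFocusedObject and NewFocusedObject properties of FocusEventData in MRTK 2. The class name FocusCounter is hypothetical, and the null checks are defensive rather than something I know to be required.

```csharp
using Microsoft.MixedReality.Toolkit.Input;
using UnityEngine;

// Hypothetical example class, not the script from the demo project.
public class FocusCounter : MonoBehaviour, IMixedRealityFocusHandler
{
    private int focusCount;

    public void OnFocusEnter(FocusEventData eventData)
    {
        focusCount++;

        // What focused this object, and what had focus before it.
        var pointerName = eventData.Pointer != null
            ? eventData.Pointer.PointerName
            : "unknown pointer";
        var previous = eventData.OldFocusedObject != null
            ? eventData.OldFocusedObject.name
            : "nothing";

        Debug.Log($"{name} focused {focusCount} time(s) by {pointerName}; " +
                  $"previous focus was on {previous}");
    }

    public void OnFocusExit(FocusEventData eventData)
    {
        // On exit, NewFocusedObject tells you where the focus went (may be null).
        var next = eventData.NewFocusedObject != null
            ? eventData.NewFocusedObject.name
            : "nothing";
        Debug.Log($"{name} lost focus to {next}");
    }
}
```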

IMixedRealityTouchHandler

This requires you to implement

- OnTouchStarted

- OnTouchCompleted

- OnTouchUpdated

But there is a twist to that. As we will soon see.

Show-off

To show off how it all works, I have created a little demo project. You can run it either in the emulator or the Unity editor (or on a HoloLens 2, if you are in the HoloLens team or part of a very few selected parties - I am unfortunately neither).

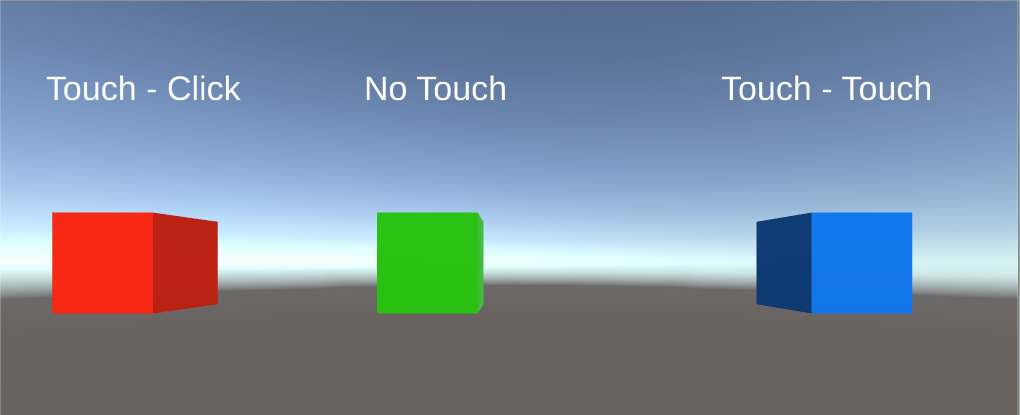

I have created a little script, CodedInteractionResponder, that shows off how this works. This script implements all three interfaces I just wrote about. If you open the demo project in Unity, it shows itself like this. All three cubes have the script attached to them.

The text above the cubes will show how many times a cube has been focused, touched or clicked. If you press play and then the space bar, the right hand will appear (or use ctrl for the left hand). Moving the hand can be done by using the mouse - if you move the hand ray over the cubes it will trigger a focus event, if you tap the left mouse button you will trigger a tap, and if you move the hand towards the cube (using the WASD keys) it will trigger a touch event.

That is to say - you would expect that. But that is not always the case.

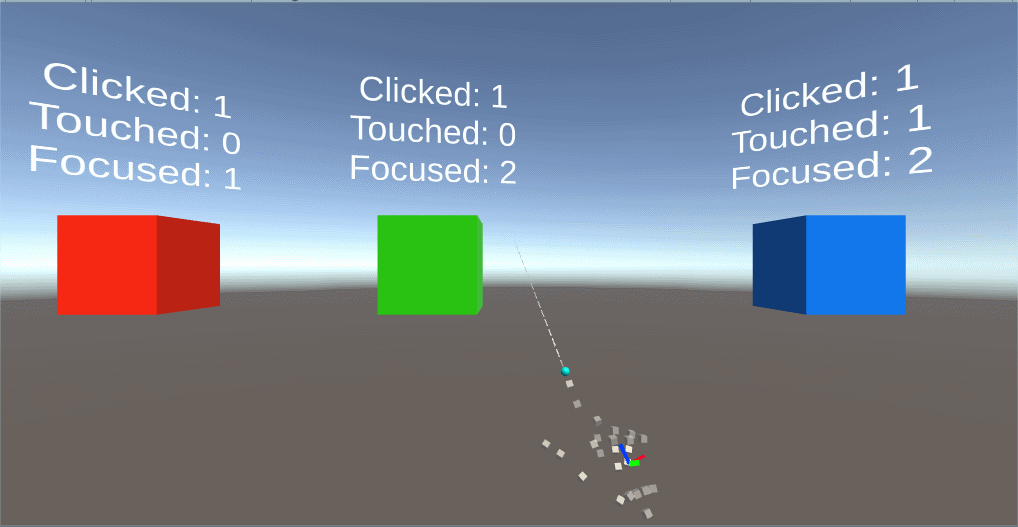

What happens is this:

- You can click or focus the green cube, but you cannot touch it. Nothing happens if you try.

- You can click, focus or touch the red cube, but if you touch it, the number of times it's clicked is increasing. Not the number of touches.

- Only the blue cube works as expected.

Yet they all have the CodedInteractionResponder. How does this compute?

NearInteractionTouchable

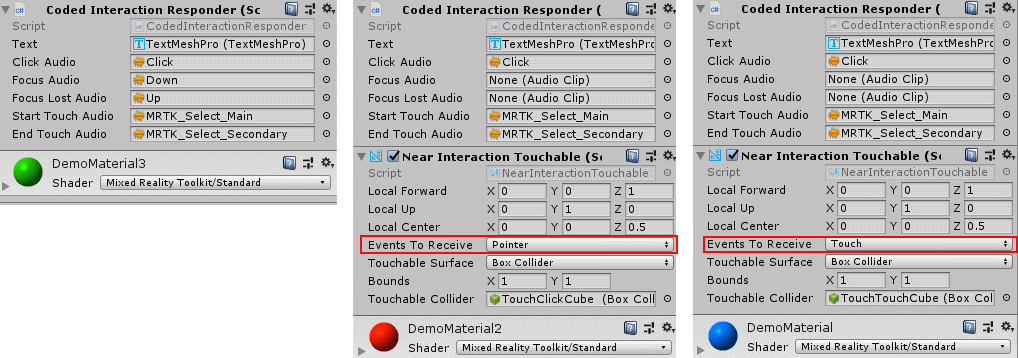

The best way to explain this is an image showing the bottom half of all three cubes.

The green cube misses the NearInteractionTouchable. This script is necessary for touch events to be fired at all. So unlike IMixedRealityPointerHandler and IMixedRealityFocusHandler, where a mere implementation of the interface will trigger an event, a touch event - that is, methods in IMixedRealityTouchHandler being called - requires the addition of a NearInteractionTouchable script.
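As a sketch of how you could guard against the green cube's problem, the touch handler below warns at startup when there is no NearInteractionTouchable on the same game object. TouchCounter is a hypothetical name and the warning is my own addition; the handler signatures assume MRTK 2's HandTrackingInputEventData.

```csharp
using Microsoft.MixedReality.Toolkit.Input;
using UnityEngine;

// Hypothetical example class, not the script from the demo project.
public class TouchCounter : MonoBehaviour, IMixedRealityTouchHandler
{
    private int touchCount;

    private void Start()
    {
        // Without this component the touch methods below are never called.
        if (GetComponent<NearInteractionTouchable>() == null)
        {
            Debug.LogWarning($"{name} implements IMixedRealityTouchHandler but has " +
                             "no NearInteractionTouchable, so it will not receive touch events.");
        }
    }

    public void OnTouchStarted(HandTrackingInputEventData eventData)
    {
        touchCount++;
        Debug.Log($"{name} was touched {touchCount} time(s)");
    }

    // Called repeatedly while the touch lasts; nothing to do for a simple counter.
    public void OnTouchUpdated(HandTrackingInputEventData eventData) { }

    public void OnTouchCompleted(HandTrackingInputEventData eventData) { }
}
```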

And NearInteractionTouchable has another trick up its sleeve. Suppose you have a button - whether it's (air) tapped or actually touched/pressed, you want to activate the same code. If you change "Events to Receive" from its default "Touch" to "Pointer" (as I did with the red cube), touching the cube will actually trigger a pointer event. This saves you a few lines of code. So basically NearInteractionTouchable can act as a kind of event router. And this is why the red cube never shows a touch event - but a click event instead.
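A sketch of what such a button-like script could look like: it only implements the pointer interface and relies on the touchable to route touches to it. The class name ButtonLikeResponder and the Activate method are hypothetical. I am also assuming that NearInteractionTouchable exposes the setting as a readable EventsToReceive property of type TouchableEventType; check this against the MRTK version you use.

```csharp
using Microsoft.MixedReality.Toolkit.Input;
using UnityEngine;

// Hypothetical example class, not the script from the demo project.
public class ButtonLikeResponder : MonoBehaviour, IMixedRealityPointerHandler
{
    private void Start()
    {
        // Assumption: EventsToReceive mirrors the "Events to Receive" inspector field.
        var touchable = GetComponent<NearInteractionTouchable>();
        if (touchable != null && touchable.EventsToReceive != TouchableEventType.Pointer)
        {
            Debug.LogWarning($"{name}: set 'Events to Receive' to 'Pointer' " +
                             "if touches should arrive as pointer events.");
        }
    }

    // Called for an (air) tap, and also for a touch routed as a pointer event.
    public void OnPointerDown(MixedRealityPointerEventData eventData)
    {
        Activate();
    }

    public void OnPointerClicked(MixedRealityPointerEventData eventData) { }

    public void OnPointerDragged(MixedRealityPointerEventData eventData) { }

    public void OnPointerUp(MixedRealityPointerEventData eventData) { }

    // Hypothetical placeholder for whatever the button should do.
    private void Activate()
    {
        Debug.Log($"{name} activated");
    }
}
```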

Be aware that NearInteractionTouchable needs a collider to work on. This collider needs to be on the same object the script is on. So if you make an empty game object as a hat stand for a bunch of smaller game objects, make sure to manually add a collider that envelops all game objects, otherwise the 'touch' won't seem to work.
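If sizing that collider by hand gets tedious, a small helper can do the arithmetic. The sketch below uses plain Unity API only; the class name EnvelopingCollider is hypothetical, and it assumes the parent object is not rotated and has a non-zero scale, because it converts world-space renderer bounds to local space in the simplest possible way. You run it from the component's context menu in the editor and then check the result by eye.

```csharp
using UnityEngine;

// Hypothetical editor-time helper, not part of the demo project.
public class EnvelopingCollider : MonoBehaviour
{
    [ContextMenu("Fit Box Collider To Children")]
    private void FitBoxColliderToChildren()
    {
        var renderers = GetComponentsInChildren<Renderer>();
        if (renderers.Length == 0)
        {
            Debug.LogWarning($"{name} has no child renderers to envelop.");
            return;
        }

        // Grow one world-space bounding box around all child renderers.
        var bounds = renderers[0].bounds;
        foreach (var childRenderer in renderers)
        {
            bounds.Encapsulate(childRenderer.bounds);
        }

        // Reuse an existing box collider, or add one to this same object.
        var box = GetComponent<BoxCollider>();
        if (box == null)
        {
            box = gameObject.AddComponent<BoxCollider>();
        }

        // Convert to local space (assumes an unrotated parent with non-zero scale).
        var scale = transform.lossyScale;
        box.center = transform.InverseTransformPoint(bounds.center);
        box.size = new Vector3(
            bounds.size.x / scale.x,
            bounds.size.y / scale.y,
            bounds.size.z / scale.z);
    }
}
```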

What, no code?

Yes, there is code, but it's pretty straightforward, and if you want to have a look at CodedInteractionResponder, you can find it on GitHub. It's actually so simple I felt it a little bit overdone to repeat parts of it verbatim in this blog post itself.
